Before I go through the execution of the ODI scenarios for the migration, I would like to emphasize that no comment or opinion you read here should drive your final decision. Whether or not to use the migration utility is a decision that needs to be made based on many different factors.

I just want to shed some light on it and see how far I can get with it.

That said, here you have the third part of the FDM to FDMEE Migration Utility posts.

To summarize the other parts:

- Part 1: Introduction to the migration utility and overview of its main capabilities

- Part 2: Installing the migration utility

Let's start the rock n' roll!

Artifacts of my legacy FDM application

The FDM application I'm trying to migrate is a complete one and may have some particularities which will be discussed during this series.

- 2 target adapters but only one being used by current locations

- Several locations

- Logic Groups (Complex types)

- No Validation Entity Groups

- No Check Rule Groups

- Import format for delimited files

- Import scripts

- Mappings

- ...

When my target application is an EPMA application

When the target application is deployed with EPMA, it must be registered manually in FDMEE before extracting the FDM artifacts.

Our target application is Classic, so there is no need to register it: the utility will create it in FDMEE.

Obviously, the migration utility assumes your target application(s) exist in your system :-)

Executing the migration: extracting FDM artifacts

We just have to execute the ODI scenario FDMC_EXTRACT_SETUP to extract legacy FDM artifacts and generate the new ones in FDMEE.

We open ODI Studio and log on to our FDMEE repository. The ODI scenario can be executed from Designer as below:

When executing the scenario, the prompt window for entering parameter values will be displayed:

- p_application_name: our target application name. This must be the same name as in our source environment. This is not the place to rename our HFM application, so the names will be the same.

- p_application_type: supported application types are CUSTOM, ESSBASE, HPL and HFM (upper case)

- p_application_db_name: not applicable to HFM. This is where we specify Planning/Essbase cube names.

For Essbase, we need to run the scenario once for each cube.

For Planning, we specify a comma-separated list of 6 plans (Ex: BalS,IncS,,,,Plan6)

- p_prefix: although this parameter is optional, it is strongly recommended. When migrating multiple applications, there could be issues with duplicate names (Ex: two locations from two apps having same name).
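Before launching the scenario, it can help to sanity-check the values you are about to type into the prompt. The following is only a minimal sketch of the rules described above, not something shipped with the utility: the `ExtractParams` class and the `validate` function are hypothetical names, and the rule that Essbase takes a single cube name per run is my reading of "run the scenario once for each cube".

```python
from dataclasses import dataclass

# Application types listed for p_application_type (upper case).
VALID_APP_TYPES = {"CUSTOM", "ESSBASE", "HPL", "HFM"}


@dataclass
class ExtractParams:
    """Hypothetical container for the FDMC_EXTRACT_SETUP prompt values."""
    p_application_name: str
    p_application_type: str
    p_application_db_name: str = ""
    p_prefix: str = ""


def validate(params: ExtractParams) -> list[str]:
    """Return a list of problems; an empty list means the values look sane."""
    problems = []
    if not params.p_application_name.strip():
        problems.append("p_application_name is required")
    if params.p_application_type not in VALID_APP_TYPES:
        problems.append("p_application_type must be CUSTOM, ESSBASE, HPL or HFM (upper case)")

    db = params.p_application_db_name
    if params.p_application_type == "HFM" and db:
        problems.append("p_application_db_name is not applicable to HFM")
    # Assumption: one cube name per run, since the scenario runs once per cube.
    if params.p_application_type == "ESSBASE" and (not db or "," in db):
        problems.append("ESSBASE expects a single cube name per run")
    # Planning takes 6 comma-separated slots; unused slots stay empty.
    if params.p_application_type == "HPL" and len(db.split(",")) != 6:
        problems.append("HPL expects 6 comma-separated plans, e.g. BalS,IncS,,,,Plan6")
    return problems
```

For example, `validate(ExtractParams("MyPlan", "HPL", "BalS,IncS,,,,Plan6"))` returns an empty list, while passing `"hfm"` in lower case as the application type is reported as a problem. The application name used here is just a placeholder.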

Once the values are entered, the following window is displayed:

This is where we select our context, agent running the scenario, and the log level.

The context will be the one linked to the FDM application (database) we want to migrate. Although you can use the local agent, I prefer to use the FDMEE agent. And the log level? Always 5, so you get the maximum detail in ODI Operator and in the log produced by the utility.

After executing the ODI scenario, I'm sure you go to ODI Operator and start pushing Refresh, don't you? :-)

And what can we see here? All the steps executed in the ODI scenario.

Summarizing the different steps, the migration process executes the following tasks:

1. Refresh ODI variables (global adapter key, sub-query for target adapters, etc.)

2. Create target application in FDMEE

- Configure application options

- Configure application dimensions

3. Create source adapters

4. Extract Categories

5. Extract Global and application periods

6. Extract Logic Account Groups

- Logic Account items and item criteria

7. Extract Check Entity Groups (Validation Entity Groups in FDMEE)

- Entity Group items

8. Extract Check Rule Groups (Validation Rule Groups in FDMEE)

- Check Rule Group items

9. Extract Import Formats

- Import format definitions

- Import format for source adapters

10. Extract locations

11. Extract data mappings

I must say that I was surprised that I did not get any error when executing the scenario but...

Do green ticks mean the migration succeeded?

A glimpse of FDMEE after the migration

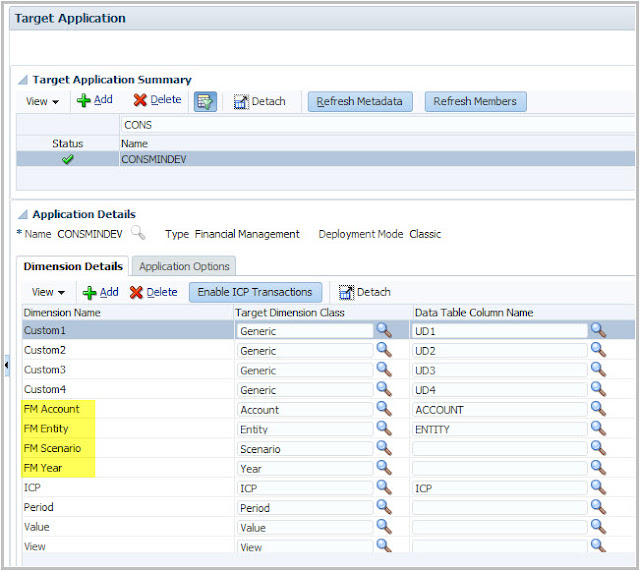

Let's start checking artifacts in FDMEE. I will start with the target application. Why? Because it's the first object being created (ODI step 15). That makes sense as all other objects in FDMEE depend on it.

The target application is created in steps 15 to 23, which basically create the application and add the application options and dimensions based on the target adapter configuration in legacy FDM.

Cool! The HFM application has been created in FDMEE and its dimensions have been added. Do you see anything special?

Dimension names are created based on the dimension aliases defined in FDM classic, so if you did not change them from the common "FM dimension" pattern, your dimensions will look like that. You would have to update the aliases in FDM classic before running the migration.

I'm not going through application options right now so I will assume they were correctly imported from adapter options in FDM classic.

Let's have a look at other artifacts like locations or import formats:

Mayday, Mayday! We have a problem :-( No import formats were imported from FDM, so something actually went wrong. Unfortunately, having green ticks does not mean success.

Let's dive into the details! We may be able to shed some light on it.
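Since green ticks are not enough, one simple habit is to compare how many artifacts of each type you had in legacy FDM with how many you can find in FDMEE after the run. Here is a minimal sketch of that comparison. The function name and the artifact labels are hypothetical, and the counts in the usage note are made-up illustrative numbers; how you obtain the real counts (UI, SQL, reports) is up to you.

```python
def find_missing_artifacts(legacy_counts: dict[str, int],
                           fdmee_counts: dict[str, int]) -> list[str]:
    """List artifact types that have fewer entries in FDMEE than in legacy FDM."""
    missing = []
    for artifact, expected in legacy_counts.items():
        # An artifact type absent from the FDMEE side counts as zero migrated.
        found = fdmee_counts.get(artifact, 0)
        if expected > 0 and found < expected:
            missing.append(f"{artifact}: expected {expected}, found {found}")
    return missing
```

With illustrative inputs such as `{"locations": 12, "import formats": 4}` for legacy FDM and `{"locations": 12, "import formats": 0}` for FDMEE, the function returns a single entry for import formats, which is exactly the kind of gap a fully green ODI session can hide.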

The log: fdmClassicUpgrade.log

Before investigating further, you should know that the migration utility produces a log which is stored in the temporary directory:

In that log we can see the values of the ODI variables used during the migration. The funny thing is that the log stops after importing categories. Where is the rest then? ODI Operator is your new best friend if you want to troubleshoot why some objects were not migrated.
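If you want to peek at the end of that log without hunting for it, a small helper like the one below can do it. This is a sketch under assumptions: `tail_migration_log` is a hypothetical name, and the default folder is whatever Python considers the temporary directory, which may not be the same temporary directory used by the process running the ODI agent. Pass the folder explicitly if yours differs.

```python
import tempfile
from pathlib import Path

LOG_NAME = "fdmClassicUpgrade.log"  # log file name produced by the migration utility


def tail_migration_log(directory=None, lines=20):
    """Return the last `lines` lines of the migration log, or [] if it is not found."""
    # Assumption: fall back to Python's notion of the temporary directory.
    base = Path(directory) if directory else Path(tempfile.gettempdir())
    log_path = base / LOG_NAME
    if not log_path.is_file():
        return []
    # errors="replace" avoids failing on unexpected characters in the log.
    with log_path.open(encoding="utf-8", errors="replace") as handle:
        return handle.read().splitlines()[-lines:]
```

Calling `tail_migration_log()` right after a run shows where the log stopped, which tells you from which step onwards you need to rely on ODI Operator instead.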

Did your ODI scenario also execute successfully, yet you can't see your FDMEE artifacts?

You may want to troubleshoot, or you may want to switch your approach and recreate them manually.

If you choose option 1, please keep reading.

Next stop: digging into ODI!